Some thoughts on the "post-persuasion" information environment

What if everyone believes everyone is full of shit?

Hello friends. It’s been a minute! The last real post for this newsletter was in May of 2025. Today, after a lengthy hiatus, I’m writing on account of three things:

I wanted to share some thoughts on the current environment, specifically as it relates to online information and how we interact with ~truth~;

I wanted to share what I’ve been up to (especially for those who are email-only subscribers and don’t engage on the Substack app); and

I have a humble request!

1. Some thoughts

I have found that the degree of fervor reflected in the online world does not reflect the state of our country at the ground level. Perhaps this has always been true to some extent, but it seems more true today than in times past.

I think most rational people in the real world would agree — even if some won’t say it — that our country is being run by a corrupt lunatic surrounded by sycophants and demagogical neophytes. Put more softly and simply, that Trump is a bad president and his administration is chock full of unqualified loyalists. I doubt most people would be taken aback by such a characterization of the current administration, regardless of their politics or who they voted for in 2024. And, at the very least, few people can argue that the outcomes of the current administration’s policies have been good for the country.

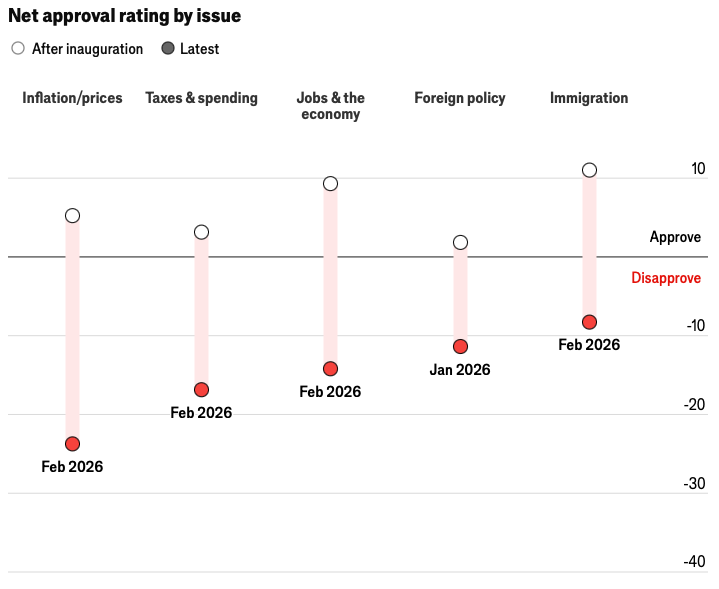

Recent polling data would suggest as much. Not only is Trump underwater on every major issue that won him the election…

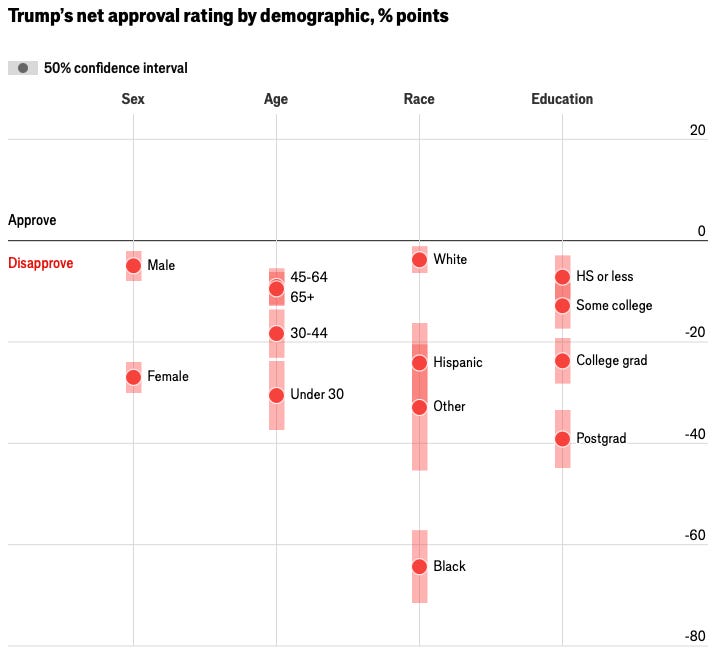

The demographic splits are no less damning…

With such indiscriminate disapproval, shouldn’t it follow logically that people are on edge in real life? We’ve certainly seen flashes of social rupture like, for example, in Minneapolis. But the recent ICE outrage and even the ‘No Kings’ protests last summer seemed benign compared to the national turmoil during the Summer of Floyd or in the wake of Jan. 6. I think the difference boils down to more recent shifts in how people are interacting with and internalizing information.

At a basic level, managing stress is part of staying functional. Evolutionarily, parasympathetic nervous system regulation was core to human survival. Those who could systematically down-regulate threat responses were able to conserve energy and maintain social bonds. In times of social or political turbulence, functional individuals preserve their cognitive capacity by hunkering down and exercising agency over their own thought patterns. They compartmentalize anxiety-inducing information — especially based on whether such information is actionable — in order to redirect scarce mental resources towards getting the things that need to get done, done.[1]

Despite the insanity of the current political climate in the US, I haven’t seen much evidence of people in the real world losing their shit like they were in 2020-21. And despite everyone collectively recognizing that online political discourse has become utterly schizophrenic, people seem relatively sane and grounded IRL. This apparent chasm between lived and observed realities is curious. Are we collectively more well-adjusted now? Are we better at compartmentalizing? Have we just become desensitized? Is it that Covid did a number on people’s mental health and stability?

One explanation of the chasm could be headline whiplash. Many postulated the existence of this phenomenon in financial markets vis-à-vis Trump’s erratic tariff rhetoric, especially in the months following Liberation Day. After a certain point, new tariff announcements by Trump ceased to induce volatility in markets. Some chalked up this surprising lack of price reaction to sheer investor exhaustion. Shock therapy, if you will.

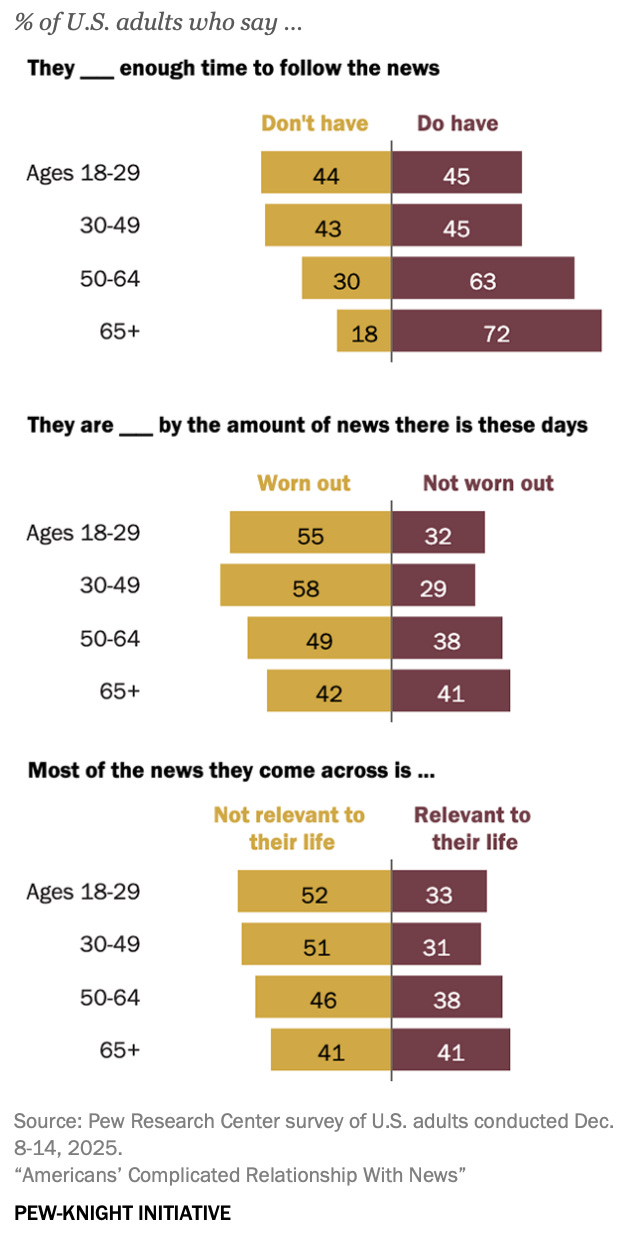

Another explanation, though, is that perhaps people have chosen to narrow the aperture of their informational intake. With information now flowing in such abundance, maybe we’re increasingly forced to pick and choose which issues to stay current on. It does seem to have become relatively fashionable in Gen-Z/Millennial social circles to “delete the apps” — to touch grass, read a book, or join a run club in lieu of continuing to tap the digital dopamine spigots like Hinge or Instagram or TikTok or X (pick your poison, really).

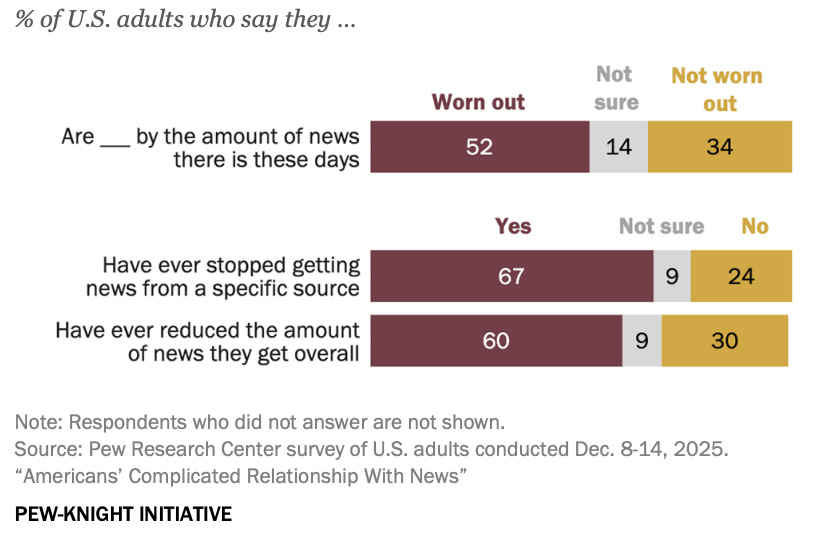

This trend of “tuning out” could also be manifesting in changing news habits. In the sense that engaging with political news is functionally the same kind of dopamine drip — and is often routed through the same kinds of high-affect, high-identity channels — I think more people have grown wary of even participating in political talk in favor of preserving their own sanity (not to mention, potentially, their reputation).

A recent Pew survey shows that about half of Americans say they are worn out by news, and many have tuned out:

And younger adults in particular are more likely to cite obstacles to following the news:

This, provided it’s also the trend with respect to political news, is, in my view, a healthy correction. “Talking politics” — what some political scientists have described as “political hobbyism” — has become too much of a recreational sport and is way too online.

However the other, darker, side of the coin is that as this happens — as the less dysfunctional people log off — online narratives become increasingly shaped by a smaller yet more high-arousal cohort. Those who remain engaged — the “terminally online” — are afforded more narrative control. And so, on the whole, the information environment grows substantively more extreme and unmoored.

Herein lies the paradox: normal people logging off makes the internet worse.

Algorithms overweight the people who are most motivated to speak, and then further reward the speech behavior that keeps others engaged. We know that political posting is heavily concentrated among a small share of users, and research suggests that moral-emotional and out-group-focused content is systematically rewarded with greater spread. Humans revel in rage and the system optimizes for this. As more normal people opt out of this digital doom loop, the remaining discourse becomes both more rigid and more radical.

Who, then, can be relied on to widen the context window and/or generate a more nuanced online information environment? We typically lend credence to expert viewpoints because their claims are presumed to be battle-tested or in some fashion institutionally vouched-for.[2] The experts themselves are incentivized to make their work visible and this desire leads them to cultivate an online presence. That’s all fine and good.

But with the audience they reach tending to increasingly skew toward higher-engagement, higher-frequency users[3] — a dogmatic and cognitively-motivated cohort — nuance is dead on arrival. Meanwhile the discerning people who are well-reasoned thinkers and thus more open to nuance — the very people who are more likely to have “deleted the apps”[4] — are absent from the online conversation altogether. The net result is almost definitionally a deterioration in the quality of online information. The environment turns degenerative.

This is not to say that the viewpoint of some enraged online commenter is inherently wrong. Neither is it to say that every Joe Rogan take is misinformed, nor that every single one of Tucker Carlson’s podcast guests is a kook. Alex Jones actually got a few things right. Robert Reich has had a prescient take or two. And despite his numerous high-conviction predictions that were just flat out wrong, Paul Krugman’s academic work fundamentally advanced economists’ thinking on international trade.

But the credulous tendencies (Rogan) and patently outlandish espousals (Tucker), not to mention the partisan hackery (Reich and Krugman), have justifiably served to tarnish the credibility of all of the above narrative peddlers — along with their peers with whom they are conflated, fairly or not. They, and many others like them, simply aren’t to be trusted as good stewards of information. This isn’t a value judgment per se, nor is the distrust irrational. It’s simply the case that, in Bayesian terms, credibility matters just as much as credence.[5]

Bayes’ theorem tangent: what if everyone believes everyone is full of shit?

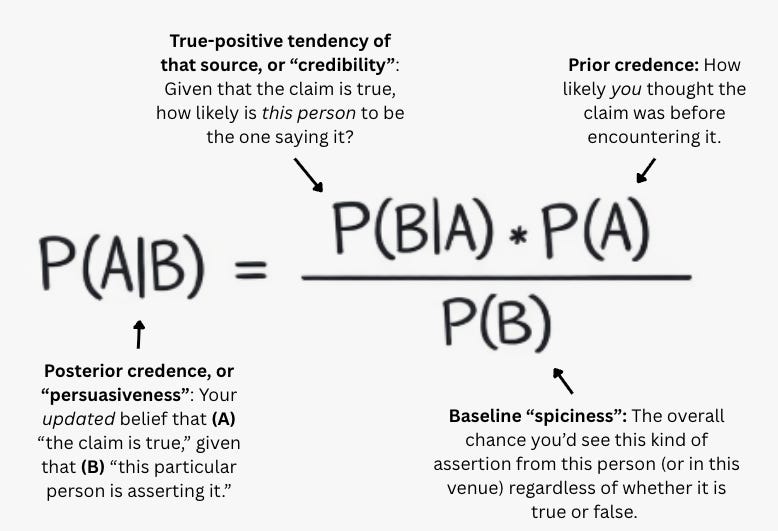

Whether you should believe a claim to be true — let’s call it the “persuasiveness” of some purported truth — is, according to Bayes’ theorem, an empirical question with three moving parts:

Credibility: Who is the claim being articulated by, and how have that source’s claims fared over time?

Credence: How likely is the claim to be true, independent of who is articulating it?

Spiciness: How unlikely is the claim to have been claimed, regardless of whether it’s true or false?

Think of it this way. In a low-trust environment, “credibility” becomes a scarce and valuable commodity. Instead of “if it’s true, how likely is this person to be the one saying it?” being the baseline, “if it’s false, how likely are they to say it anyway?” becomes the baseline. This is how you would approach an environment if you perceive everyone in it to be totally full of shit: “Credibility,” or P(B|A), takes on the low value of what P(B|not A) would typically be in a high-trust environment.

In such a low-trust environment, updates to beliefs stop happening — basically persuasion stalls out unless a given claim is blatantly true and mundane.
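For the quantitatively inclined, here is a minimal sketch of that stall-out in Python. It takes one reading of the setup above: A is "the claim is true" and B is "this source asserts it." The function name and every number below are illustrative assumptions, not measurements of anything. The only point is that when P(B|A) sinks to the level of P(B|not A), the posterior collapses back to the prior, so hearing the claim tells you nothing.

```python
def posterior(prior, p_say_if_true, p_say_if_false):
    """Return P(A|B) via Bayes' theorem.

    prior          -- P(A), the "credence" in the claim before hearing it
    p_say_if_true  -- P(B|A), the "credibility" term
    p_say_if_false -- P(B|not A), how readily the source says it anyway
    """
    # P(B): total probability that the claim gets asserted at all
    p_b = p_say_if_true * prior + p_say_if_false * (1 - prior)
    return p_say_if_true * prior / p_b


# Hypothetical numbers, chosen only to illustrate the mechanism.
prior = 0.30

# High-trust world: the source is far likelier to say it if it is true.
high_trust = posterior(prior, p_say_if_true=0.80, p_say_if_false=0.10)

# Low-trust world: P(B|A) has dropped to what P(B|not A) used to be.
low_trust = posterior(prior, p_say_if_true=0.10, p_say_if_false=0.10)

print(f"prior:      {prior:.2f}")
print(f"high trust: {high_trust:.2f}")  # belief moves well above the prior
print(f"low trust:  {low_trust:.2f}")   # belief stays at the prior
```

Under these assumed numbers the same claim lifts belief from 0.30 to about 0.77 in the high-trust case and leaves it at 0.30 in the low-trust case. A claim only ends up believed in the low-trust world if its prior was already high, which is one way to read "blatantly true and mundane."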

To that end, heavy is the burden of any nameless, faceless commenter these days to prove they aren’t full of shit. A good Bayesian might actually presume a given comment section is full of shit until proven otherwise because comment sections are constantly filled with spicy, outlandish takes from behind profiles with esoteric avatars and zero established credibility. Regardless of a claim’s actual basis in fact, the behaviors of these countless schizophrenic “reply guys” have invariably muddied the waters for everyone. (Ask yourself: when was the last time something you encountered in a comment section genuinely caused you to change your mind?)

So, with bad stewards of truth at the top of the informational food pyramid, and with the remaining participants in online discourse either perceiving everyone else as insane and/or wrong, being insane and/or wrong themselves, or both, everyone begins to behave as though the truth isn’t even worth solving for. In other words, if we go into every online interaction believing that others have low credibility while we simultaneously encounter claims that are spicy or insane, our baseline presumption becomes that every claim we see is a hallucination with no basis in reality. How can we even begin to map our beliefs to some shared objective truth in such an environment? What basic incentive even is there to be truthful?

Obviously I’m taking this whole dynamic to its limit, but I think it’s directionally accurate. I think many would agree that, increasingly, the most fundamentally sound heuristic for engaging online — yes, even on our beloved Substack — is to be deeply skeptical of everything you come across. This is tiresome, and moreover those who are heterodox thinkers or are good at being skeptical are probably the ones most likely to have a decent job worth prioritizing in real life. They’re probably the ones most likely to have checked out and stopped participating altogether. They’re probably reading books and are the subscribers who read a newsletter via email instead of an app.

What we’re left with is a cesspool of online discourse where trust is low and people have calcified belief systems, rarely, if ever, updating their priors. Indeed, what we’re left with is a “post-persuasion” information environment: persuasion isn’t literally impossible, but the conditions for good-faith belief-updating — shared facts, shared credibility heuristics, shared incentives — are absent.

I’m not the first to frame the current online milieu in this manner. And I’m certainly not the most qualified to speak on these issues. Nor do I have answers or solutions. But I believe “post-persuasion” is the correct frame. And it aligns with my “anecdata” over the past few years. The academic philosopher Dan Williams is a good source on these topics and I’d encourage readers to check out his work. In his own words:

The deep question of social epistemology—the genuine puzzle—is not why people hold false beliefs. It is why people sometimes form true beliefs.

[…]

In complex, modern societies, the relationship between reality and our representations of reality—between what Lippmann called the “real environment” and the “pseudo-environments” that make up our mental models of the real environment—is heavily mediated by complex chains of trust, testimony, and interpretation.

Consider for a moment how absurd it is that this “genuine puzzle” has seemingly become more puzzling at a time when the informational exchange rate has never been higher. In a world where anyone can know virtually anything at any time, how is it that the epistemic environment has gotten demonstrably shittier? Dan, if you’re reading this, please tell me!

Finally, some may be tempted to argue that AI uptake will drive better informational hygiene over time. The pushback I’d offer on this is that the LLM context window — the cache of “artificial” intelligence — is derived from human canon in the first place. The promise of “AGI” is that LLMs will ultimately be able to expand the context window endogenously, independent of advances in human-derived intelligence. According to OpenAI CEO Sam Altman, “AGI” is right around the corner. Color me hopeful yet deeply skeptical of that claim.

2. An update

If you don’t like the current state of the information environment, you can do something about it. To quote a 4th grade classroom poster, you can “Be the change you want to see in the world.” But in all seriousness, you really can just do things.

The original intent of this newsletter was, broadly, to share “intel” on the world from a human perspective and, hopefully, a perspective oriented around truth discernment. That is, Truth with a capital-T. Truth as an object, an ideal. Truth as something to be sought and solved for. Truth as something that is falsifiable, conditional on new information coming to light. Truth as a North Star.

If this sounds ~~fake and gay~~ aspirational and naive, that’s because it is. Throughout much of history, being socially ostracized meant certain death. We humans are therefore basically programmed to signal in-group alliance above all else. So truth-seeking, as it were, is an unnatural state of being. Moreover, it’s an open philosophical debate whether some material objective truth even exists in the first place, much less whether some guy with a newsletter on Substack has the capacity to be its arbiter. Scott Alexander is probably the closest thing we’ve seen to such an arbiter, and I’ll be the first to admit that I’m no Scott Alexander.

At any rate, I eventually pivoted to almost exclusively writing this newsletter on topics that I do have some expertise on: financial markets and macroeconomics. I stopped in May of last year because, well, idk, my heart wasn’t in it like it was at the beginning. I love talking markets and macro, but my interests are broader these days and it felt like I had pigeon-holed myself. Also, I’d been working on a side project…

New Western (NW), a consortium of a few dozen Substackers with a shared vision of epistemic purity, was launched last year by Peter Banks and myself to iterate on these aspirations. The NW Discord community remains highly active, and maybe someday we’ll actually do something with the publication as we originally intended. In the meantime, shoot me a DM if you’d like to join the Discord. We’re always looking to add new members who are heterodox thinkers, open-minded, and conscientious.

But just as Peter and I were starting to move on that project, a new opportunity arose — ADHD vibes, I know — and we both chose to join The Boyd Institute, a future-oriented policy lab (i.e., think tank) founded by tech/media entrepreneur Jeff Giesea. (Peter joined in August 2025 and I shortly thereafter.)

We’re really pleased with the work we did on housing last quarter — establishing “ground truths,” convening with academics, activists, contrarians, and other subject matter experts — having ultimately planted our flag on policies designed to support the cohort with, in our estimation, the highest economic multiplier effects: young, middle-income, family-forming households.

This quarter we’re focused on solutions to address the following question: How can America improve its problem-solving capacity? We’re also running an essay contest through the middle of March with a top prize of $2,500! More details here if you’re curious:

Anyway, that’s what I’ve been up to. I’m bullish on the future of Boyd — it’s exciting to be working on a nonpartisan project alongside people who share a fundamental belief in free market dynamism. We hope to reorient policy discussions around coming up with bold, asymmetric ideas to solve the existential problems facing Americans today. Subscribe here if you’re interested in following along:

3. A request

Finally, I’ll wrap this up with a bit of personal stuff and a humble request. I’ve been running for a few years now, on and off. I’d never really done it competitively, but more as a solitary practice to maintain physical and mental health. However, I’ve recently decided to get more serious.

On February 15th, I’ll be racing in the Austin Half Marathon. I signed up for this race in light of very unfortunate circumstances: my beloved Aunt Marie passed away in December after battling pancreatic cancer for years, and I was already planning on being in Austin to attend her funeral the same weekend as the race.

After signing up for the race, it dawned on me that I could use this opportunity to raise money towards pancreatic cancer prevention in my Aunt Marie’s honor. So I did some digging and decided to partner with Project Purple, who will be sponsoring me in the race!

One reason Marie was able to fight as long as she did is that her pancreatic cancer was caught early through routine imaging after a previous rare cancer. That early detection likely gave her years she otherwise would not have had. It also made early detection, patient support, and better treatment outcomes — pillars of Project Purple's mission — deeply personal to my family.

So far we’ve been able to raise nearly $1K but there’s plenty of time left to donate. My request is that you kindly consider helping me reach my goal of raising $2,100 ($100 for each kilometer in the race). And if you’re not able to help today, please share the link to the donation page → HERE.

Your donation will also:

Be tax-deductible(!)

Literally help save and extend human lives

Make you feel good about yourself

Make me run faster

(Additionally, if you’d like to donate but wish to remain anonymous, please reach out to me and I can accommodate.)

Cheers, and thanks for reading this far. I’m not sure when I’ll be posting again, but until next time, let’s all try to be Good Samaritans and Good Bayesians! ;-)

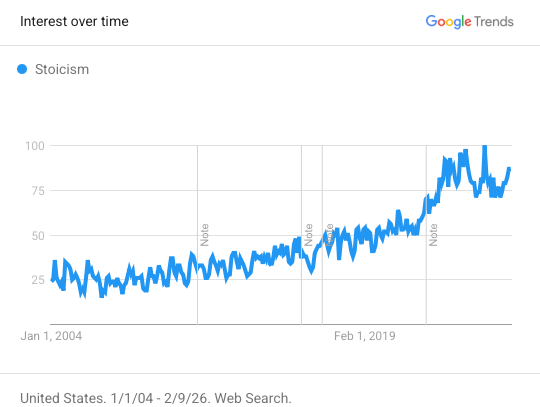

[1] After all, Marcus Aurelius wrote Meditations while serving as a commander-emperor, overseeing years of exhausting frontier wars. It is both deeply ironic and entirely fitting that the most famous text about inward calm wasn’t written by a monk, but by a man personally responsible for war, death, plague response, and the survival of a civilization.

Also, is anyone surprised that interest in stoicism has increased nearly threefold since mid-2016?

[2] Never mind that trust in the intelligentsia has also eroded over time. It’s an important development, but let’s for now assume that trust in the “experts” and the “science” remains high.

[3] Users who are either themselves terminally online and insane, or users who tune in to observe the insanity out of cynical curiosity, purely for anthropological entertainment purposes, not to ascertain ground truths or meaningfully contribute to the discussion.

[4] In the same way that the partner you’re really looking for on Hinge isn’t actually on Hinge, the most rational political discussions probably happen on walks in the park, not in the reply sections online.

[5] Just as a person’s credibility matters in terms of whether they should be taken at face value, so, too, do preconceived notions of the medium of exchange (i.e., the platform) matter. Admittedly I am biased against taking anyone seriously who exclusively posts on Bluesky (or Truth Social for that matter).

I’d view X in the same light and once did, except for my having actively disengaged with ridiculous content whereby the algorithm finally picked up on the fact that I am there to engage with Serious Content only. But it can be fickle — I can sense this sort of algorithmic entropy towards my feed being muddied with toxic and chaotic content the moment I let my guard down and don’t swiftly recognize, and ignore(!), an unserious tweet. It requires an exhausting level of vigilance.

This is a great article. Chapeau

"The original intent of this newsletter was, broadly, to share “intel” on the world from a human perspective and, hopefully, a perspective oriented around truth discernment. That is, Truth with a capital-T. Truth as an object, an ideal. Truth as something to be sought and solved for. Truth as something that is falsifiable, conditional on new information coming to light. Truth as a North Star."

Some things I have read recently that relate to this:

https://open.substack.com/pub/qualiaadvocate/p/postmodernism-for-stem-types-a-clear?utm_campaign=post-expanded-share&utm_medium=web

https://slatestarcodex.com/2018/01/24/conflict-vs-mistake/

I'm still trying to wrap my mind around how to think about this. I can understand how "the effect of a message is more important than the truth of a message" is a rational position to take, especially if there's a chance (well, certainty) that others will take that tack.

But I can't shake the feeling that "conflict theory," that the truth value of a message is irrelevant, is (for lack of a better term) evil. From the first essay:

"This doesn’t mean conflict theory is always correct - it means it’s correct for mapping conflict arenas. When you’re actually in a cooperative space focused on building accurate models, mistake theory applies. But Twitter isn’t that space, and pretending it is doesn’t just make you vulnerable - it makes you fake."

But why isn't Twitter that space? There's no reason for Twitter to be a conflict space rather than a collaborative space. The only reason Twitter is a combat rather than collaborative space is because people expect it to be.

I feel like the only virtuous course of action is to act as if (almost) everywhere is a collaborative space where truth is paramount. Yes, there may be a subjective aspect as to which truths you broadcast, but you can back up those decisions with other truths. Other people may act in bad faith, may prioritize impact over truth; other people will also steal. That doesn't give you permission to steal. The truest version of our values, of our morality, is how we act when others do not abide by them. If you'll abandon your commitment to truth just because others do, why should I believe anything you say?

... this is why I'm not a politician.